Prompt Engineering

Jan 22, 2026

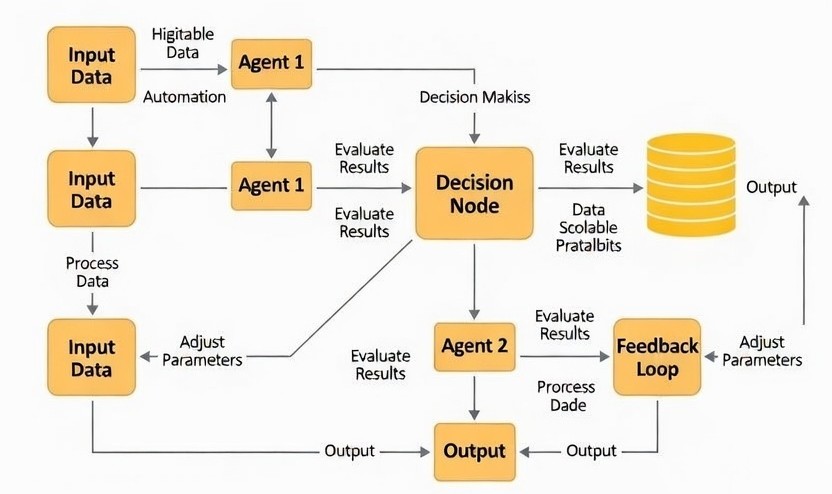

Traditional LLM deployments use a single prompt-response architecture: one prompt goes in and one completion comes out. Complex enterprise workflows, however, often need more than that. They call for distributed reasoning, task decomposition, and autonomous collaboration between AI components.

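Modern agent systems connect to APIs, databases, vector search engines, and code execution environments. With tool calling, the model emits a structured request (a tool name plus arguments) that the host application validates and executes. This turns an LLM from a conversational model into a component that can act on external systems.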
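To make the tool-calling idea concrete, here is a minimal sketch of the dispatch step in Python. Everything in it is an assumption for illustration: `fake_model` stands in for a real LLM, the tools `lookup_order` and `add` are hypothetical, and the JSON shape (`tool` plus `arguments`) is one plausible convention rather than any particular vendor's format.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical tools. In a real system these would wrap an API,
# a database query, a vector search, or a code sandbox.
def lookup_order(order_id: str) -> Dict[str, Any]:
    orders = {"A100": {"status": "shipped", "items": 2}}
    return orders.get(order_id, {"status": "unknown"})


def add(a: float, b: float) -> float:
    return a + b


# Registry mapping the tool names the model may use to callables.
TOOLS: Dict[str, Callable[..., Any]] = {"lookup_order": lookup_order, "add": add}


def fake_model(user_message: str) -> str:
    """Stand-in for an LLM (assumption): returns a tool call as JSON text."""
    if "order" in user_message:
        return json.dumps({"tool": "lookup_order", "arguments": {"order_id": "A100"}})
    return json.dumps({"tool": "add", "arguments": {"a": 2, "b": 3}})


def dispatch(model_output: str) -> Dict[str, Any]:
    """Parse a tool call and run it, reporting failures as data instead of raising."""
    try:
        call = json.loads(model_output)
        name, args = call["tool"], call.get("arguments", {})
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        return {"ok": False, "error": f"malformed tool call: {exc}"}

    # Only names present in the registry are allowed to run.
    if not isinstance(name, str) or name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name!r}"}

    try:
        return {"ok": True, "tool": name, "result": TOOLS[name](**args)}
    except TypeError as exc:
        # Raised when the arguments do not match the tool's signature.
        return {"ok": False, "error": f"bad arguments for {name}: {exc}"}


if __name__ == "__main__":
    print(dispatch(fake_model("Where is my order?")))
    print(dispatch(fake_model("What is 2 + 3?")))
```

The registry acts as an allowlist, so the model can only trigger functions the application has chosen to expose. Returning errors as plain dictionaries lets the host feed them back to the model for a retry instead of crashing the workflow.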

When architected correctly, multi-agent systems enable scalable automation across customer support, internal operations, analytics workflows, and enterprise knowledge management.